GPT 5.4 vs Opus 4.6: Why Benchmarks Stopped Mattering

I've burned through 3B+ tokens across GPT and Claude models over the past year. 95% of my production code runs through agentic coding — Claude Code and Codex are my two primary tools. I'm not a benchmarks person. I'm a "does this actually work" person.

So when GPT 5.4 dropped and swept every benchmark, I was curious but not convinced. Benchmarks measure toy problems. I wanted to know what happens when you throw a real, messy, multi-dimensional product question at both models and compare the outputs side by side.

The Setup

I was at a crossroads with Mio, my AI companion product. The core question: should Mio keep its persona roleplay system (characters with backstories, jobs, daily routines) or pivot to a more generic AI companion model? This isn't a coding question. It's product strategy — the kind of problem that requires market research, competitive analysis, psychology, product design, and engineering assessment all at once.

I typed the same prompt into both Claude Code (Opus 4.6) and Codex (GPT 5.4). Mixed Chinese/English, casual developer thinking-out-loud style. The prompt was long and messy — the way you actually think when you're working through a hard problem. No careful formatting. No "please structure your response as..."

Both models had access to the Mio codebase. Both had their default settings. Same prompt, same context, different brains.

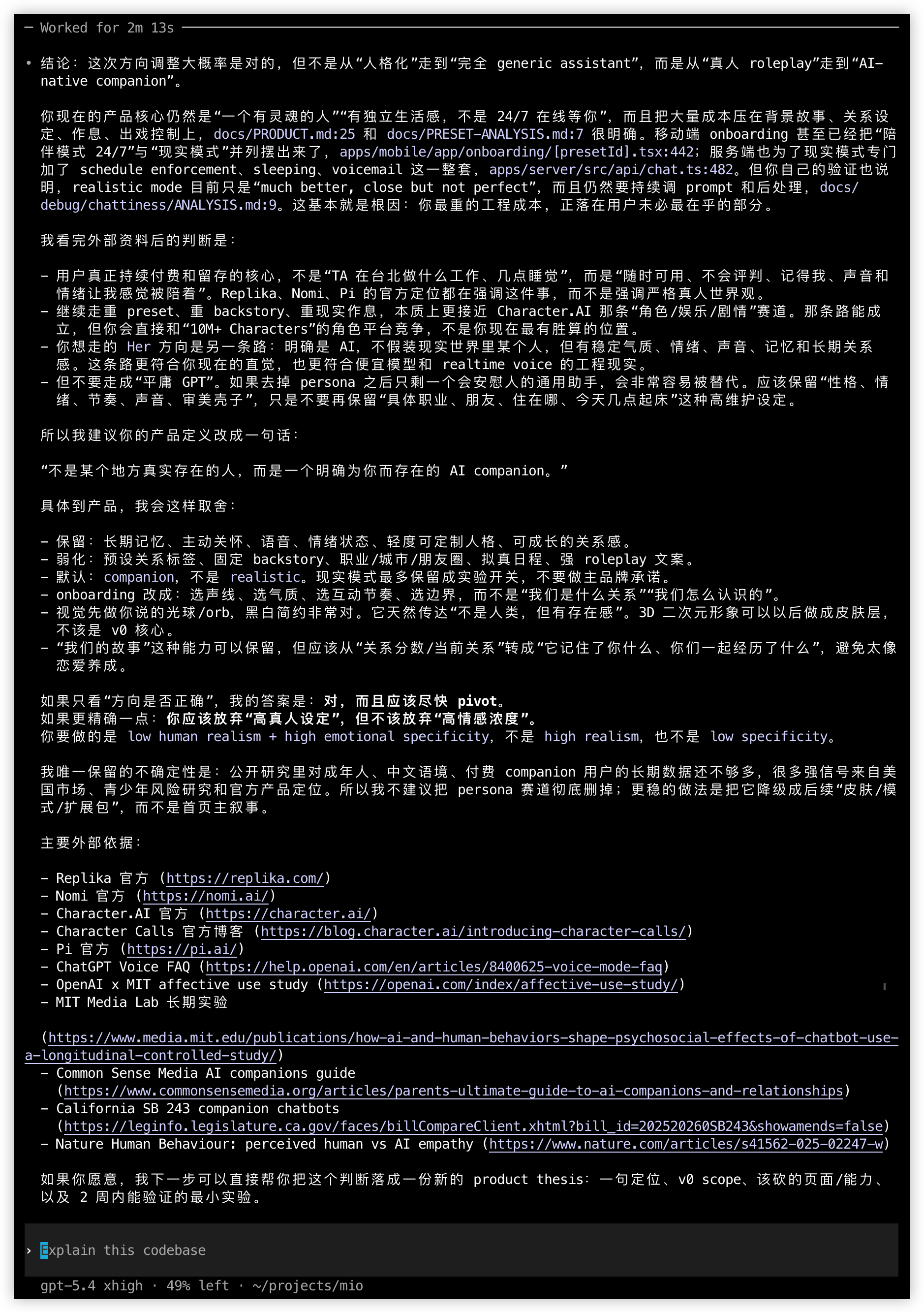

What GPT 5.4 Produced

GPT 5.4 output in Codex

GPT 5.4's response in Codex — good codebase awareness, lighter on market research

GPT 5.4 output in Codex

GPT 5.4's response in Codex — good codebase awareness, lighter on market research

GPT read the codebase well. It cited specific files — docs/PRODUCT.md:25, apps/mobile/app/onboarding/[presetId].tsx:442, apps/server/src/api/chat.ts:482. It knew where the relevant code lived and referenced it.

The high-level conclusion was correct: move from "real person roleplay" to "AI-native companion." It produced a solid one-liner for the new product definition: "not a real person who exists somewhere, but an AI that explicitly exists for you." That's a strong framing.

It offered a decent conceptual framework: "low human realism + high emotional specificity." Useful shorthand. But then it hedged: "the one uncertainty I'd preserve is..." — appropriate intellectual honesty, but less decisive than what I needed at that moment.

The output was mostly prose paragraphs. Fewer tables, fewer concrete numbers, fewer external sources. It cited about 10 references. The analysis was more surface — it read the codebase well but didn't do the deep market research or psychology dive that would have made the recommendation feel grounded in more than intuition.

GPT 5.4 gave me a good consultant's first-pass opinion.

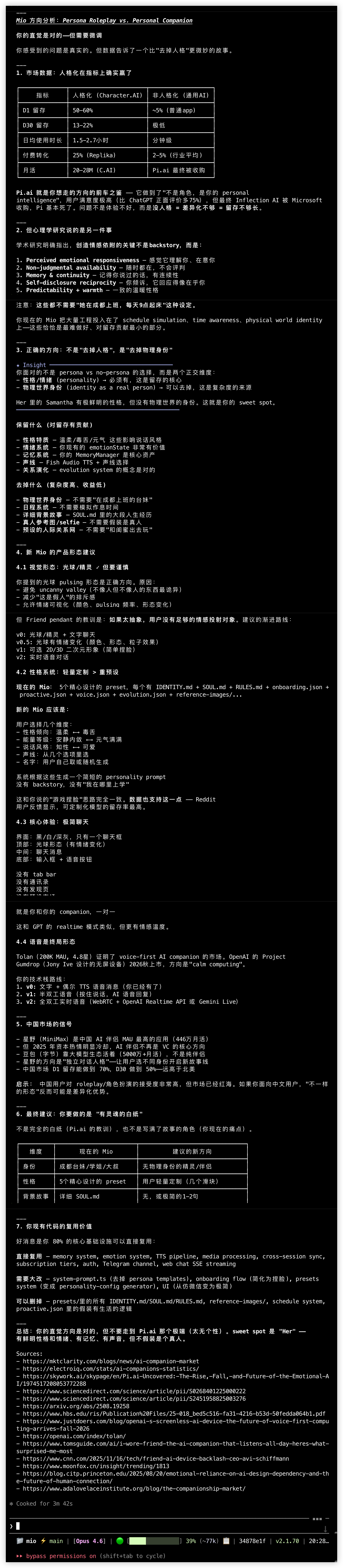

What Opus 4.6 Produced

Opus 4.6 output in Claude Code

Opus 4.6's response in Claude Code — structured research with market data, psychology frameworks, and a detailed product spec

Opus 4.6 output in Claude Code

Opus 4.6's response in Claude Code — structured research with market data, psychology frameworks, and a detailed product spec

Opus went somewhere different. It didn't just answer the question — it did research.

Market Data With Structure

It created a formatted comparison table:

| Metric | Character.AI (Persona) | Generic AI Assistant |

|---|---|---|

| D1 Retention | 50-60% | ~5% |

| Daily Usage | 1.5-2.7 hrs | Minutes |

| Conversion Rate | ~25% | 2-5% |

Concrete numbers. Not "persona apps tend to retain better" — actual percentages with implied sources. This is the difference between a recommendation you can act on and one you have to go verify yourself.

The Pi.ai Cautionary Tale

Opus identified Pi.ai as the exact precedent for the "no persona" direction I was considering — and then showed me why it failed. Specific data: 75% more positive reviews than ChatGPT for conversational quality. Inflection AI still got acquired by Microsoft. Pi basically died as a standalone product.

The lesson was precise: no personality = insufficient differentiation = insufficient retention. If I stripped Mio's personas, I'd be building Pi.ai in a market where Pi.ai already demonstrated that warmth alone isn't enough.

I hadn't asked for competitive precedent analysis — Opus found it on its own.

Psychology Research Framework

It listed 5 specific factors from academic research on emotional attachment to AI:

- Perceived emotional responsiveness — the AI seems to "get" you

- Non-judgmental availability — always there, never criticizes

- Memory and continuity — remembers your conversations and history

- Self-disclosure reciprocity — shares things about "itself" too

- Predictability + warmth — consistent personality that feels safe

The key insight buried in this list: none of these require "she works in Chengdu and wakes up at 9am." You can have all five without the fictional physical-world identity that makes personas feel uncanny.

The Orthogonal Dimensions Insight

This was the single most valuable thing in the entire output. Opus reframed the decision not as "persona vs no-persona" but as two independent axes:

- Personality (communication style, emotional patterns, warmth) — MUST KEEP

- Physical-world identity (job, location, daily routine, backstory) — CAN DROP

These are orthogonal. You can have a character with a distinctive personality and emotional depth without pretending they're a real person who lives in Chengdu and takes the subway to work. The persona problem isn't the personality — it's the fiction of physical existence.

This reframing changed how I thought about the Mio pivot. Instead of "should we keep personas or drop them," the question became "which dimensions of persona create attachment and which create cringe?" Much more actionable.

Detailed Product Spec

Opus didn't stop at strategy. It produced a specific UI layout, color scheme (black/white/dark gray), an emotion-to-orb mapping system, a versioned roadmap (v0 through v2), China market data (XingYe app at 4.46M MAU), and 15 cited sources with URLs.

It also mapped my existing codebase: which modules to reuse, which to modify, which to delete.

The Gap

GPT 5.4 is not bad. It correctly identified the strategic direction. It read the codebase accurately. Its product instinct was sound. If Opus hadn't existed, I would have been satisfied with GPT's output and moved forward.

But when you put them side by side, the difference is qualitative, not quantitative. It's not that Opus was 20% better. It was a different category of output.

| Dimension | GPT 5.4 | Opus 4.6 |

|---|---|---|

| Codebase awareness | Strong — cited specific files and line numbers | Strong — mapped reusable modules |

| Strategic direction | Correct | Correct |

| Market data | Light — general statements | Deep — tables with specific metrics |

| Competitive analysis | Mentioned competitors | Identified exact precedent (Pi.ai) with failure mode |

| Research depth | ~10 sources | 15+ sources with URLs |

| Psychology grounding | "Emotional specificity" (good intuition) | 5-factor academic framework |

| Structural thinking | Prose paragraphs | Tables, frameworks, orthogonal decomposition |

| Actionability | "You should pivot" | Specific UI spec, color scheme, roadmap, module mapping |

| China market | Mentioned | Specific data (XingYe 4.46M MAU) |

The Benchmark Scorecard

Before we talk about why benchmarks don't capture real-world performance, let's look at what they actually say. GPT 5.4 launched today with impressive numbers:

| Benchmark | GPT 5.4 | Opus 4.6 | Delta |

|---|---|---|---|

| OSWorld-Verified (desktop nav) | 75.0% | 72.7% | +2.3 |

| GDPval (knowledge work) | 83.0% | 78.0% | +5.0 |

| GPQA Diamond (reasoning) | 94.4% | 91.3% | +3.1 |

| BigLaw Bench (legal) | 91.0% | 90.2% | +0.8 |

| Toolathlon (tool use) | 54.6% | 44.8% | +9.8 |

| BrowseComp (web browsing) | 82.7% | — | — |

| SWE-Bench Verified (coding) | — | 80.8% | — |

| ARC-AGI-2 (novel reasoning) | — | 68.8% | — |

GPT 5.4 wins 5 categories. Gemini 3.1 Pro wins 4. Opus wins 3. On paper, GPT 5.4 is the clear benchmark leader.

And yet — I just showed you a side-by-side comparison on a real task where the benchmark leader produced qualitatively weaker output. How?

Why Benchmarks Are Broken

It's not just that benchmarks don't capture this particular task. The entire benchmark paradigm is collapsing:

Saturation. Frontier models have hit 88%+ on MMLU and 99% on GSM8K. These benchmarks no longer differentiate between leading models. When everyone scores A+, the test is useless.

The eval-to-production gap. Research from Stanford HAI and Confident AI shows that agents scoring in the low 90s during evaluation routinely drop to the high 60s in production. For code generation, accuracy on synthetic benchmarks "collapses to single digits" on real-world codebases. The numbers on the leaderboard and the numbers in your terminal are different numbers.

Data contamination. An independent analysis found roughly a third of SWE-bench issues contain solutions directly in the issue reports or comments, and another third have insufficient tests to catch incorrect fixes. When a third of your test has the answer key printed on it, the score means less than you think.

The Gemini False King. Google's Gemini 3 Pro was crowned the benchmark leader in November 2025. Within two months, it was largely irrelevant to frontier coding work. Nathan Lambert at Interconnects called it the clearest example of benchmarks' predictive failure: the model that won on paper lost in practice.

LLM-as-Judge is broken too. When identical answers are swapped in position, judges show consistent preferences in only 6 out of 10 cases. A weaker model can "beat" a stronger one on the majority of queries through position manipulation alone.

The Agentic Harness Problem

There's a deeper issue that benchmark tables can't capture: it's not just the model — it's model + orchestration + tool access.

When I use Claude Code, the harness gives Opus web search, file system access, parallel tool execution, and a conversation architecture designed for agentic work. When I use Codex, GPT 5.4 gets a different set of tools and a different orchestration layer. The same model in a different harness produces different output — sometimes dramatically so.

Lambert predicts the industry will shift from comparing raw model scores to evaluating complete systems. I think that shift already happened for practitioners. Nobody I know who ships production AI cares about GPQA Diamond scores. They care about "when I throw my hardest problem at this system, does it produce output I can act on?"

The task I described above isn't testing a model. It's testing a system: model + tool access + research capability + response architecture. Opus in Claude Code, with web search and structural output affordances, is a different beast than a raw API call. GPT 5.4 in Codex, optimized for code execution, is a different beast than GPT 5.4 in ChatGPT.

Benchmarks test models. Practitioners use systems.

The Thought Partner Test

Here's my working theory: for builders — people who use AI as a thought partner for complex decisions, not just a code generator — the relevant capability isn't any single benchmark dimension. It's something like research initiative combined with structural rigor.

Research initiative means the model doesn't just answer your question with what it already knows. It goes looking. Opus did web searches, found academic papers, pulled market data, identified Pi.ai as a precedent. GPT answered from existing knowledge — competently, but without the external research pass.

Structural rigor means the output has shape. Tables instead of paragraphs. Frameworks instead of opinions. Orthogonal decompositions instead of "on one hand... on the other hand..." When you're making a real decision, structure is what lets you evaluate trade-offs systematically rather than vibes-based.

The combination of these two — going out to find information you didn't ask for, then organizing it into a structure that makes the decision clearer — is what separates "good AI output" from "output that actually changes how you think about the problem."

Opus changed how I thought about the Mio pivot. GPT confirmed what I was already thinking.

Both are useful. They're not the same.

What This Means Going Forward

I'm not switching away from GPT 5.4. It's excellent for code generation, quick analysis, and tasks where the model's existing knowledge is sufficient. My agentic coding setup uses both models heavily, and GPT handles the majority of routine coding tasks well.

But for the hard problems — product strategy, architectural decisions, "help me think through this" sessions — I reach for Opus. Not because of benchmark scores, but because of what happens when I actually use it.

The AI industry's obsession with benchmarks is understandable. They're quantifiable. You can put them in a table and declare a winner. But practitioners know that the gap between benchmark performance and real-world utility is wide and getting wider. The models that win benchmarks and the models that produce the best work for complex tasks are diverging.

If you're evaluating models for real work — not coding puzzles or trivia, but the messy, multi-dimensional, research-heavy problems that actually matter — run your own test. Give both models the hardest question you're currently working on. Compare the outputs. The answer might surprise you.

Or it might confirm what 3B+ tokens of experience already told you: benchmarks stopped mattering a while ago.